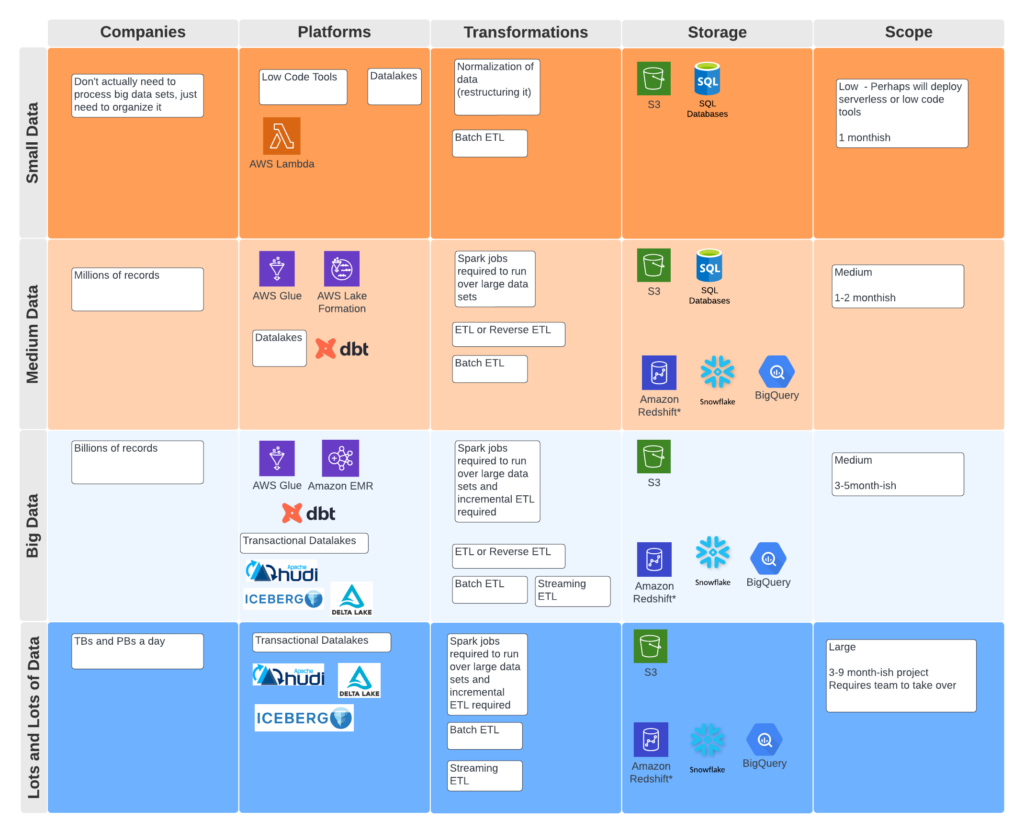

In the data engineering space, we have seen quite a few low-code and no-code tools cross our radar. Low-code tools come with a trade-off: you get to production faster, but the minute you need to customize something outside of the toolbox, you may run into problems. That’s when we usually fall back on custom development using things like Glue, EMR, or even transactional data lakes, depending on the requirements.

This list is split into open source, ELT, streaming, popular tools, orchestration tools, and the rest of the tools. One thing I have been looking for in this space is a first-class open source product. I know that many of these products start as open source and end up releasing a managed version. Personally, I am all for open source teams making their money back somehow, but ideally the platforms would keep an open source license.

One thing my team has been noticing is the traction dbt has been gaining in the market. It flips the paradigm a bit by doing ELT (Extract, Load, Transform) instead of ETL: everything is loaded into your data warehouse first, and then you run your transformations on it there. This is not the same as reverse ETL, which pushes data from the warehouse back out to operational tools.
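
To make the load-first, transform-later idea concrete, here is a minimal sketch using Python's built-in `sqlite3` as a stand-in for a warehouse. This is not how dbt itself works internally; the table names, the sample rows, and the `run_elt` helper are all made up for illustration.

```python
import sqlite3

# Hypothetical raw records standing in for an extract from a source system.
# Amounts are deliberately left as strings, the way raw data often arrives.
RAW_ORDERS = [
    (1, "alice", "2023-01-05", "19.99"),
    (2, "bob", "2023-01-05", "5.00"),
    (3, "alice", "2023-01-06", "12.50"),
]


def run_elt(conn):
    """Load raw rows untouched, then transform them with SQL in the database."""
    # Load: land the data as-is in a raw table, with no cleanup on the way in.
    conn.execute(
        "CREATE TABLE raw_orders "
        "(id INTEGER, customer TEXT, order_date TEXT, amount TEXT)"
    )
    conn.executemany("INSERT INTO raw_orders VALUES (?, ?, ?, ?)", RAW_ORDERS)

    # Transform: build a modeled table from the raw table using SQL only.
    conn.execute(
        """
        CREATE TABLE customer_totals AS
        SELECT customer,
               COUNT(*) AS order_count,
               ROUND(SUM(CAST(amount AS REAL)), 2) AS total_amount
        FROM raw_orders
        GROUP BY customer
        """
    )
    return conn.execute(
        "SELECT customer, order_count, total_amount "
        "FROM customer_totals ORDER BY customer"
    ).fetchall()


if __name__ == "__main__":
    connection = sqlite3.connect(":memory:")
    for row in run_elt(connection):
        print(row)
```

The point of the split is that the raw table stays available, so the transformation can be changed and rerun without going back to the source system.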

Another project I have been watching, on Zach Wilson’s recommendation, is mage.ai. It is a pretty spiffy way of creating quick DAGs with executable Python notebooks. The team is pretty active soliciting feedback on Slack, and it is one to watch for the future. Airbyte and Meltano are newer to me, and I hope to take some time to play with those tools. This list is by no means exhaustive, so let me know if there is anything I have missed.

Open Source Tools

Product: Airbyte

Description: Airbyte is an open-source data integration platform that allows users to replicate data from various sources and load it into different destinations. Its features include real-time data sync, robust data transformations, and automatic schema migrations.

Link: https://airbyte.io/

Github Link: https://github.com/airbytehq/airbyte

Cost: Free, with paid plans available

Release Date: 2020

Number of Employees: 11-50

Product: mage.ai

Description: mage.ai is an open-source tool for building, running, and managing data pipelines. Pipelines are put together as DAGs of executable, notebook-style Python blocks, which makes it quick to go from an idea to a working pipeline.

Link: https://mage.ai/

Github Link: https://github.com/mage-ai

Cost: Open source

Release Date: 2020

Number of Employees: 11-50

Product: Meltano

Description: Meltano is an open-source data integration tool that allows users to build, run, and manage data pipelines using YAML configuration files. Its features include source and destination connectors, transformations, and orchestration.

Link: https://meltano.com/

Github Link: https://github.com/meltano/meltano

Cost: Free, with paid options available

Release Date: 2020

Number of Employees: 11-50

Product: Apache NiFi

Description: Apache NiFi is a web-based dataflow system that allows users to automate the flow of data between systems. Its features include a drag-and-drop user interface, data provenance, and support for various data sources and destinations.

Link: https://nifi.apache.org/

Github Link: https://github.com/apache/nifi

Cost: Free

Release Date: 2014

Number of Employees: N/A

Product: Apache Beam

Description: Apache Beam is an open-source, unified programming model for batch and streaming data processing. It provides a simple, portable API for defining and executing data processing pipelines, with support for various execution engines.

Link: https://beam.apache.org/

Github Link: https://github.com/apache/beam

Cost: Free

Release Date: N/A

Number of Employees: N/A

ELT

Product: dbt (data build tool)

Description: dbt is an open-source data transformation and modeling tool that enables analysts and engineers to transform their data into actionable insights. It provides a simple, modular way to manage data transformation pipelines in SQL, with features such as version control, documentation generation, and testing.

Link: https://www.getdbt.com/

Github Link: https://github.com/dbt-labs/dbt

Cost: Free, with paid options available for enterprise features and support

Release Date: 2016

Number of Employees: 51-200

Streaming

Product: Confluent

Description: Confluent is a cloud-native event streaming platform based on Apache Kafka that enables organizations to process, analyze, and respond to data in real-time. It provides a unified platform for building event-driven applications, with features such as data integration, event processing, and management tools.

Link: https://www.confluent.io/

Github Link: https://github.com/confluentinc

Cost: Free, with paid options available for enterprise features and support

Release Date: 2014

Number of Employees: 1001-5000

Popular Tools

Product: Fivetran

Description: Fivetran is a cloud-based data integration platform that automates the process of data pipeline building and maintenance. It provides pre-built connectors for over 150 data sources and destinations, with features such as data synchronization, transformation, and monitoring.

Link: https://fivetran.com/

Github Link: https://github.com/fivetran

Cost: Subscription-based, with a free trial available

Release Date: 2012

Number of Employees: 501-1000

Product: Alteryx

Description: Alteryx is an end-to-end analytics platform that enables users to perform data blending, advanced analytics, and machine learning tasks. It provides a drag-and-drop interface for building and deploying analytics workflows, with features such as data profiling, data quality, and data governance.

Link: https://www.alteryx.com/

Github Link: https://github.com/alteryx

Cost: Subscription-based, with a free trial available

Release Date: 1997

Number of Employees: 1001-5000

Product: Informatica

Description: Informatica is a data management platform that enables users to integrate, manage, and govern data across various sources and destinations. It provides a unified platform for data integration, quality, and governance, with features such as data profiling, data masking, and data lineage.

Link: https://www.informatica.com/

Github Link: https://github.com/informatica

Cost: Subscription-based, with a free trial available

Release Date: 1993

Number of Employees: 5001-10,000

Product: Matillion

Description: Matillion is a cloud-native ETL platform that enables users to extract, transform, and load data into cloud data warehouses. It provides a visual interface for building and deploying ETL workflows, with features such as data transformation, data quality, and data orchestration.

Link: https://www.matillion.com/

Github Link: https://github.com/matillion

Cost: Subscription-based, with a free trial available

Release Date: 2011

Number of Employees: 501-1000

Orchestration Tools

Product: Prefect

Description: Prefect is a modern data workflow orchestration platform that enables users to automate their data pipelines with Python. It provides a simple, Pythonic interface for defining and executing workflows, with features such as distributed execution, versioning, and monitoring.

Link: https://www.prefect.io/

Github Link: https://github.com/PrefectHQ/prefect

Cost: Free, with paid options available for enterprise features and support

Release Date: 2018

Number of Employees: 51-200

Product: Dagster

Description: Dagster is a data orchestrator that enables users to build, test, and deploy reliable data pipelines. It provides a Python-based API for defining and executing pipelines, with features such as type-checking, validation, and monitoring.

Link: https://dagster.io/

Github Link: https://github.com/dagster-io/dagster

Cost: Free, with paid options available for enterprise features and support

Release Date: 2019

Number of Employees: 11-50

Product: Airflow

Description: Airflow is an open-source platform for creating, scheduling, and monitoring data workflows. It provides a Python-based API for defining and executing workflows, with features such as task dependencies, retries, and alerts.

Link: https://airflow.apache.org/

Github Link: https://github.com/apache/airflow

Cost: Free

Release Date: 2015

Number of Employees: N/A (maintained by the Apache Software Foundation)

Product: Azkaban

Description: Azkaban is an open-source workflow manager that enables users to create and run workflows on Hadoop. It provides a web-based interface for creating and scheduling workflows, with features such as task dependencies, notifications, and retries.

Link: https://azkaban.github.io/

Github Link: https://github.com/azkaban/azkaban

Cost: Free

Release Date: 2010

Number of Employees: N/A (maintained by the Azkaban Project)

Product: Luigi

Description: Luigi is an open-source workflow management system that enables users to build complex pipelines of batch jobs. It provides a Python-based API for defining and executing workflows, with features such as task dependencies, retries, and notifications.

Link: https://github.com/spotify/luigi

Github Link: https://github.com/spotify/luigi

Cost: Free

Release Date: 2012

Number of Employees: N/A (maintained by Spotify)

Product: Oozie

Description: Oozie is a workflow scheduler system for managing Hadoop jobs. Workflows are defined in XML as DAGs of actions and can be triggered by time or data availability, with a web console for monitoring running jobs.

Link: https://oozie.apache.org/

Github Link: https://github.com/apache/oozie

Cost: Free

Release Date: 2009

Number of Employees: N/A (maintained by the Apache Software Foundation)
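
What the orchestration tools above have in common is the idea of tasks with dependencies that run in order and get retried on failure. The sketch below shows that idea using only the Python standard library (`graphlib`, Python 3.9+). It is not the API of Prefect, Dagster, Airflow, Luigi, or any other tool listed here; `run_workflow`, the task names, and the retry count are all hypothetical.

```python
from graphlib import TopologicalSorter


def run_workflow(tasks, dependencies, max_retries=2):
    """Run tasks in dependency order, retrying each task if it raises.

    tasks: dict mapping task name -> zero-argument callable
    dependencies: dict mapping task name -> set of upstream task names
    Returns the execution order and a dict of task results.
    """
    # Every task gets a node, even if it has no upstream dependencies.
    graph = {name: dependencies.get(name, set()) for name in tasks}
    order = list(TopologicalSorter(graph).static_order())

    results = {}
    for name in order:
        # One initial attempt plus up to max_retries retries.
        for attempt in range(max_retries + 1):
            try:
                results[name] = tasks[name]()
                break
            except Exception:
                if attempt == max_retries:
                    raise  # retries exhausted, fail the whole workflow
    return order, results


if __name__ == "__main__":
    calls = {"transform": 0}

    def extract():
        return [1, 2, 3]

    def transform():
        # Fails on the first call to simulate a transient error.
        calls["transform"] += 1
        if calls["transform"] < 2:
            raise RuntimeError("transient failure")
        return "transformed"

    def load():
        return "loaded"

    order, results = run_workflow(
        {"extract": extract, "transform": transform, "load": load},
        {"transform": {"extract"}, "load": {"transform"}},
    )
    print(order)
    print(results)
```

The real tools layer scheduling, distributed execution, alerting, and a UI on top of this core loop, which is where most of their differences lie.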

Other Tools

3forge – https://3forge.com/ – 3forge delivers software tools for creating financial applications and data delivery platforms.

Ab Initio Software – https://www.abinitio.com/ – Ab Initio Software provides a data integration platform for building large-scale data processing applications.

Adeptia – https://adeptia.com/ – Adeptia offers a cloud-based, self-service integration solution that allows users to easily connect and automate data flows across multiple systems and applications.

Aera – https://www.aeratechnology.com/ – Aera provides an AI-powered platform for enterprises to accelerate their digital transformation by automating and optimizing business processes.

Aiven – https://aiven.io/ – Aiven offers managed cloud services for open-source technologies such as Kafka, Cassandra, and Elasticsearch.

Ascend.io – https://ascend.io/ – Ascend.io provides a unified data platform that allows users to build, scale, and automate data pipelines across various sources and destinations.

Astera Software – https://www.astera.com/ – Astera Software offers a suite of data integration and management tools for businesses of all sizes.

Black Tiger – https://blacktiger.io/ – Black Tiger provides an open-source data pipeline framework that simplifies the process of building and deploying data pipelines.

Bryte Systems – https://www.brytesystems.com/ – Bryte Systems offers an AI-powered data platform that helps organizations manage their data operations more efficiently.

CData Software – https://www.cdata.com/ – CData Software provides a suite of drivers and connectors for integrating with various data sources and APIs.

Census – https://www.getcensus.com/ – Census offers an automated data syncing platform that allows businesses to keep their customer data up-to-date across various systems and applications.

CloverDX – https://www.cloverdx.com/ – CloverDX provides a data integration platform for building and managing complex data transformations.

Data Virtuality – https://www.datavirtuality.com/ – Data Virtuality offers a data integration platform that allows users to connect and query data from various sources using SQL.

Datameer – https://www.datameer.com/ – Datameer provides a data preparation and exploration platform that enables users to analyze large datasets quickly and easily.

DBSync – https://www.mydbsync.com/ – DBSync provides a cloud-based data integration platform for connecting and synchronizing data across various systems and applications.

Denodo – https://www.denodo.com/ – Denodo provides a data virtualization platform that allows users to access and integrate data from various sources in real-time.

Devart – https://www.devart.com/ – Devart offers a suite of database tools and data connectivity solutions for various platforms and technologies.

DQLabs – https://dqlabs.ai/ – DQLabs provides a self-service data management platform that automates the process of discovering, curating, and governing data assets.

eQ Technologic – https://www.eqtechnologic.com/ – eQ Technologic offers a data integration platform that enables users to extract, transform, and load data from various sources.

Equalum – https://equalum.io/ – Equalum provides a real-time data ingestion and processing platform that enables organizations to make data-driven decisions faster.

Etleap – https://etleap.com/ – Etleap offers a cloud-based data integration platform that simplifies the process of building and managing data pipelines.

Etlworks – https://www.etlworks.com/ – Etlworks provides a data integration platform that allows users to create and manage complex data transformations.

Harbr – https://harbr.com/ – Harbr is a data exchange platform that connects and facilitates secure data collaboration between organizations.

HCL Technologies (Actian) – https://www.actian.com/ – Actian provides hybrid cloud data analytics software solutions that enable organizations to extract insights from big data and act on them in real time.

Hevo Data – https://hevodata.com/ – Hevo Data provides a cloud-based data integration platform that enables companies to move data from various sources to a data warehouse or other destination in real time.

Hitachi Vantara – https://www.hitachivantara.com/ – Hitachi Vantara provides data management, analytics, and storage solutions for businesses across various industries.

HULFT – https://www.hulft.com/ – HULFT provides data integration and management solutions that enable businesses to streamline data transfer and reduce data integration costs.

ibi – https://www.ibi.com/ – ibi provides data and analytics software solutions that help organizations make data-driven decisions.

Impetus Technologies – https://www.impetus.com/ – Impetus Technologies provides data engineering and analytics solutions that enable businesses to extract insights from big data.

Infoworks – https://www.infoworks.io/ – Infoworks provides a cloud-native data engineering platform that automates the process of data ingestion, transformation, and orchestration.

insightsoftware – https://insightsoftware.com/ – insightsoftware provides financial reporting and enterprise performance management software solutions that help organizations improve their financial and operational performance.

Integrate.io – https://www.integrate.io/ – Integrate.io provides a cloud-based data integration platform that enables businesses to integrate and manage data from various sources.

Intenda – https://intenda.net/ – Intenda provides a data integration and analytics platform that enables businesses to unlock insights from their data.

IRI – https://www.iri.com/ – IRI provides data management and integration software solutions that enable businesses to integrate and manage data from various sources.

Irion – https://www.irion-edm.com/ – Irion provides a data management and governance platform that enables businesses to automate data quality and compliance processes.

K2view – https://www.k2view.com/ – K2view provides a data fabric platform that enables businesses to connect and manage data across various sources and applications.

Komprise – https://www.komprise.com/ – Komprise provides an intelligent data management platform that enables businesses to manage and optimize data across various storage tiers.

Minitab – https://www.minitab.com/ – Minitab is a statistical software package designed for data analysis and quality improvement.

Nexla – https://www.nexla.com/ – Nexla offers a data operations platform that automates the process of ingesting, transforming, and delivering data to various systems and applications.

OpenText – https://www.opentext.com/ – OpenText is a Canadian company that provides enterprise information management software.

Palantir – https://www.palantir.com/ – Palantir is an American software company that specializes in data analysis.

Precisely – https://www.precisely.com/ – Precisely provides data integrity, data integration, and data quality software solutions.

Primeur – https://www.primeur.com/ – Primeur is an Italian software company that offers products and services for data integration, managed file transfer, and digital transformation.

Progress – https://www.progress.com/ – Progress is an American software company that provides products for application development, data integration, and business intelligence.

PurpleCube – https://www.purplecube.ca/ – PurpleCube is a Canadian consulting company that specializes in data integration, data warehousing, and business intelligence.

Push – https://www.push.tech/ – Push is a French software company that provides products and services for data processing and analysis.

Qlik – https://www.qlik.com/ – Qlik provides business intelligence software that helps organizations visualize and analyze their data.

RELX (Adaptris) – https://www.adaptris.com/ – Adaptris, now a RELX company, offers data integration software that helps organizations connect systems and applications.

Rivery – https://rivery.io/ – Rivery is a cloud-based data integration platform that allows businesses to consolidate, transform, and automate data.

Safe Software – https://www.safe.com/ – Safe Software provides spatial data integration and spatial data transformation software.

Semarchy – https://www.semarchy.com/ – Semarchy provides a master data management platform that helps organizations consolidate and manage their data.

Sesame Software – https://www.sesamesoftware.com/ – Sesame Software offers data management solutions that simplify data integration, data warehousing, and data analytics.

SnapLogic – https://www.snaplogic.com/ – SnapLogic provides a cloud-based integration platform that enables enterprises to connect cloud and on-premise applications and data.

Software AG – https://www.softwareag.com/ – Software AG offers a platform that enables enterprises to integrate and optimize their business processes and systems.

Stone Bond Technologies – https://www.stonebond.com/ – Stone Bond Technologies offers a platform that enables enterprises to integrate data from various sources and systems.

Stratio – https://www.stratio.com/ – Stratio offers a platform that enables enterprises to process and analyze large volumes of data in real-time.

StreamSets – https://streamsets.com/ – StreamSets offers a data operations platform that enables enterprises to ingest, transform, and move data across systems and applications.

Striim – https://www.striim.com/ – Striim offers a real-time data integration and streaming analytics platform that enables enterprises to collect, process, and analyze data in real-time.

Suadeo – https://www.suadeo.com/ – Suadeo provides a platform that enables enterprises to integrate and manage their data from various sources.

Syniti – https://www.syniti.com/ – Syniti offers a data management platform that enables enterprises to integrate, enrich, and govern their data.

Talend – https://www.talend.com/ – Talend provides a cloud-based data integration platform that enables enterprises to connect, cleanse, and transform their data.

Tengu – https://tengu.io/ – Tengu offers a data engineering platform that enables enterprises to automate the process of ingesting, processing, and delivering data.

ThoughtSpot – https://www.thoughtspot.com/ – ThoughtSpot offers a cloud-based platform that enables enterprises to analyze their data in real-time.

TIBCO Software – https://www.tibco.com/ – TIBCO Software offers a platform that enables enterprises to integrate and optimize their business processes and systems.

Tiger Technology – https://www.tiger-technology.com/ – Tiger Technology offers a platform that enables enterprises to manage, move, and share their data across systems and applications.

Timbr.ai – https://timbr.ai/ – Timbr.ai provides a platform that enables enterprises to manage and process their data in real-time.

Upsolver – https://www.upsolver.com/ – Upsolver offers a cloud-native data integration platform that enables enterprises to process and analyze their data in real-time.

WANdisco – https://wandisco.com/ – WANdisco offers a platform that enables enterprises to replicate and migrate their data across hybrid and multi-cloud environments.

ZAP – https://www.zapbi.com/ – ZAP offers a data management platform that enables enterprises to integrate, visualize, and analyze their data.

Domo – https://www.domo.com/ – Domo is a cloud-native platform that gives data-driven teams real-time visibility into all the data and insights needed to drive business forward.

Boomi – https://boomi.com/ – Boomi (formerly Dell Boomi) specializes in cloud-based integration, API management, and master data management. Dell acquired the company in 2010 and sold it in 2021.

Stitch – https://www.stitchdata.com/ – Stitch is a cloud-first, open-source platform for rapidly moving data. It allows users to integrate with over 100 data sources and automate data movement to a cloud data warehouse.

Sparkflows – https://sparkflows.io/ – Sparkflows is a low-code, drag-and-drop platform that enables organizations to build, deploy, and manage Big Data applications on Apache Spark.

Liquibase – https://www.liquibase.com/ – Liquibase is an open-source database-independent library for tracking, managing, and applying database schema changes.

Shipyard – https://shipyardapp.com/ – Shipyard is a low-code data orchestration platform for building, scheduling, and monitoring data workflows.

Flyway – https://flywaydb.org/ – Flyway is an open-source database migration tool that allows developers to evolve their database schema easily and reliably across different environments.
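
Since Liquibase and Flyway both revolve around tracking which schema changes have already been applied, here is a rough sketch of that idea using Python's built-in `sqlite3`. It is not either tool's actual implementation or file format; the `schema_history` table name, the `MIGRATIONS` list, and the `migrate` helper are assumptions made up for illustration.

```python
import sqlite3

# Hypothetical versioned migrations: (version number, SQL to apply).
MIGRATIONS = [
    (1, "CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)"),
    (2, "ALTER TABLE users ADD COLUMN email TEXT"),
]


def migrate(conn, migrations):
    """Apply migrations that are not yet recorded, in version order.

    Returns the list of versions applied during this call.
    """
    # The history table records which versions have already run.
    conn.execute(
        "CREATE TABLE IF NOT EXISTS schema_history (version INTEGER PRIMARY KEY)"
    )
    applied = {row[0] for row in conn.execute("SELECT version FROM schema_history")}

    newly_applied = []
    for version, sql in sorted(migrations):
        if version in applied:
            continue  # already applied in an earlier run
        conn.execute(sql)
        conn.execute("INSERT INTO schema_history (version) VALUES (?)", (version,))
        newly_applied.append(version)
    conn.commit()
    return newly_applied


if __name__ == "__main__":
    connection = sqlite3.connect(":memory:")
    print(migrate(connection, MIGRATIONS))  # first run applies everything
    print(migrate(connection, MIGRATIONS))  # second run has nothing to do
```

Running the same migrations twice is safe because of the history table, which is what lets the same set of changes be applied consistently across different environments.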